This week, the White House Office of Science and Technology Policy (OSTP) released the first update to its National AI R&D Strategic Plan since 2019, emphasizing a focus on developing “trustworthy AI” while calling for public input on next steps.

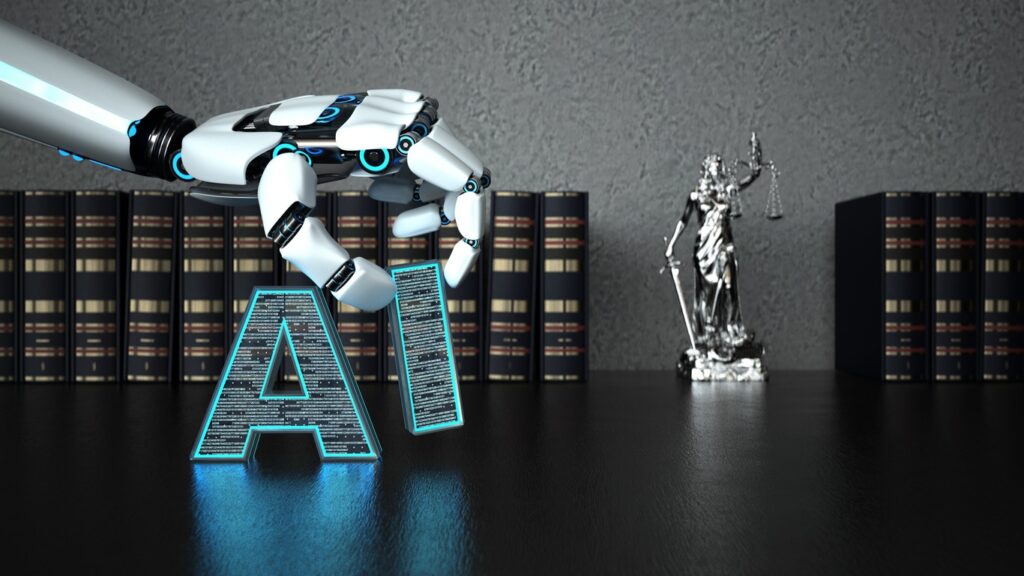

The new plan was announced alongside a report from the Department of Education that highlights the potential rewards of AI (in both academia and the public realm) but “underscores the risks associated with AI—including algorithmic bias—and the importance of trust, safety and appropriate guardrails.”

What makes both of these announcements so pivotal is their timing: they land while AI technologists are in the midst of debating the merits of artificial intelligence with lawmakers in the Senate. While those discussions have prompted some leaders in both the public and private sectors to call for an all-out halt to AI adoption, the revised Strategic Plan makes it clear that the White House is moving full steam ahead in embracing AI.

“Developed by experts across the federal government and with public input, this plan makes clear that when it comes to AI, the federal government will invest in R&D that promotes responsible American innovation, serves the public good, protects people’s rights and safety, and upholds democratic values,” the release reads. “It will help ensure continued US leadership in the development and use of trustworthy AI systems.”

9 Strategies for AI R&D Investment

The plan outlines nine strategies that will dictate the OSTP’s approach to supporting the development of new AI technologies in the US, building on the eight strategies laid out across the 2016 plan and its 2019 update. The new, ninth strategy focuses on international collaboration, emphasizing the need for cross-border cooperation in sharing knowledge and best practices.

Specifically, the strategies break down as follows:

- Make long-term research investments: This first strategy alludes heavily to the rise of generative AI (e.g., ChatGPT) and the need for a greater understanding of the implications of these tools as their capabilities increase. Along with supporting research into how certain generative AI models learn, the plan calls for “focused efforts to make AI easier to use and more reliable and to measure and manage risk.”

- Explore human-AI collaboration: This strategy also addresses the risk of misuse, calling for efforts to “develop effective methods” to track the “efficiency, effectiveness and performance of AI-teaming applications” and for research into the attributes of successful human-AI teams.

- Focus on AI’s ethical, legal and societal impact: At the heart of this strategy is the development of systems that ensure AI can behave in ways that “reflect our Nation’s values and promote equity.” This strategy also specifically calls for frameworks that promote fairness and prevent bias.

- Make AI safe and secure: This strategy calls for methods to test AI systems, vetting their functionality and security and flagging any data vulnerabilities.

- Create public AI training and testing environments: Building a diverse research community around AI development by open-sourcing high-quality AI datasets is the best path to avoiding bias while increasing the potential for innovation, the OSTP explains.

- Develop (and enforce) AI standards: Using the Biden Administration’s Blueprint for an AI Bill of Rights and NIST’s AI Risk Management Framework as a starting point, the plan calls for the adoption of broad-spectrum standards to govern the use of AI in government and in the field.

- Engage with the AI R&D workforce: The plan also recognizes that there is huge potential for job creation in the realm of AI R&D, and calls for workforce development practices to foster new opportunities.

- Promote public-private partnerships: The OSTP also plans to continue engagement with “academia, industry, international partners and other non-federal entities” to explore investment opportunities that will accelerate the practical use of AI in all fields.

- Coordinate across borders, too: In a similar vein to the last point, the OSTP emphasizes that AI development shouldn’t be siloed off if we want to enjoy the greatest innovations (and avoid the greatest risks). This includes using AI to “address global challenges, such as environmental sustainability, healthcare, and manufacturing.”

In both government and the business world, AI is more than just a discussion topic: it’s already being deployed and is evolving before our very eyes. The more that governments can play a hand in both regulating and helping fund AI R&D, the better, as it will help ensure that AI development isn’t happening in silos, limiting the potential for data bias and some of the darkest risks the technology poses.

To learn more about how teams can leverage new AI and machine learning solutions to fuel their R&D, check out our recent LinkedIn Live panel, “AI Regulation: Rules for using AI to drive innovation.”