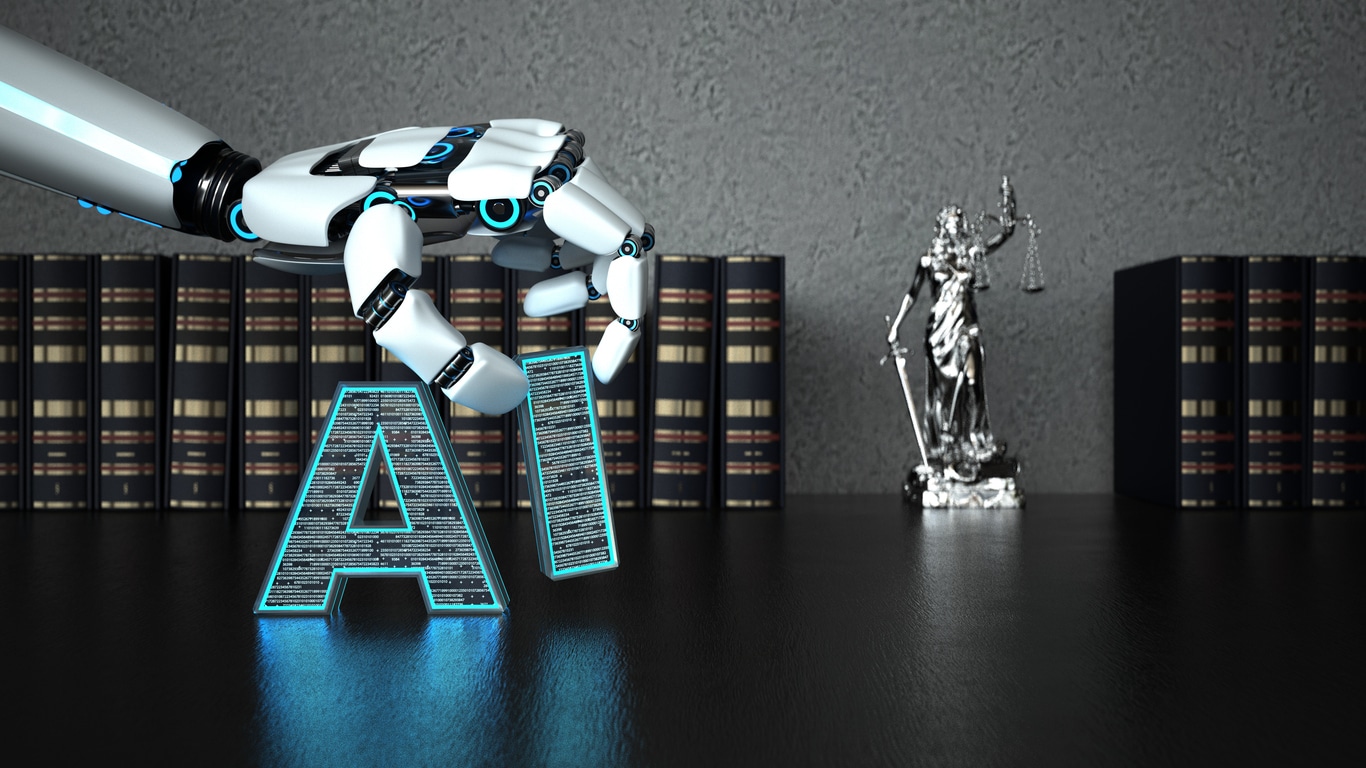

Officials from the United States and United Kingdom announced a landmark agreement this week to formally cooperate on testing and assessing the risks of artificial intelligence (AI).

You’d be forgiven for thinking that news of politicians coming together on this, announced on April 1, was an April Fool’s joke.

But given the rapid growth of AI in virtually every business sector and industry, alongside the rise of AI businesses within both the US and UK, it’s actually more surprising that such an agreement hadn’t been formalized earlier.

Signed by US commerce secretary Gina Raimondo and UK science minister Michelle Donelan, the agreement lays the groundwork for how the two governments will pool expertise and technical talent to put guardrails on this rapidly evolving tech arena.

“The U.K. and the United States have always been clear that ensuring the safe development of AI is a shared global issue,” said Secretary Raimondo in a press release. “Reflecting the importance of ongoing international collaboration, today’s announcement will also see both countries sharing vital information about the capabilities and risks associated with AI models and systems, as well as fundamental technical research on AI safety and security.”

The announcement follows the launch of AI Safety Institutes (AISIs) in both the US and UK last November. The partnership will see “secondments of researchers” from both countries, as well as an exchange of data from private sector participants. Under the agreement, the new AISIs will be able to vet private AI models built by the likes of OpenAI and Google, along with published reports from Anthropic and others that detail how safety tests inform product development.

While this partnership is a new frontier for AI, it’s modeled on existing collaborations between the NSA in the US and the UK’s Government Communications Headquarters (GCHQ), which have worked closely together for decades on national and global security issues.

So the big question remains: what will this mean for private businesses, including companies based outside the US and UK that work with AI?

Developing a “common approach” for testing AI safety

The safety tests developed by the US and UK as part of their AISI collaboration will inevitably have a global impact, because many leading AI businesses were founded or are based in the United States.

That’s not to say that these are the only major economies hoping to put safeguards in place around nascent AI.

The European Union’s AI Act and US President Joe Biden’s executive order on AI both came out last year, and both pressure businesses to disclose the results of safety tests. Canada, meanwhile, has drafted guidelines on the responsible use of AI in government, which have laid the groundwork for domestic research into private sector AI.

Canada also beat both the US and UK to finalizing data protections around AI, which date back to September 2023. That is to say nothing of the fact that Canada ranks third in the world for its number of AI researchers and its investment in new AI companies.

In fact, recent research from EDUCanada shows that more than 35,000 new jobs in AI and machine learning will come online over the next five years. Major Canadian cities, namely Toronto, Vancouver, Montreal and Ottawa, also rank highly among CBRE’s top talent markets in North America.

All of this means that businesses on both sides of the border will be affected by the work of the US and UK AISIs. They should seize the opportunity to turn these “safeguards” into genuinely unique innovation.

Using R&D to drive safer AI (and a stronger capital strategy)

Today’s lack of AI regulation can be unsettling, but it also gives new businesses an opportunity to “stake their claim” in the AI safety markets. Those markets are poised to emerge as more governments partner to understand how to deploy AI safely.

In the same vein, governments will continue to direct innovation funding programs, such as R&D tax credits and research grants, toward businesses in fields where innovation isn’t just an opportunity but an imperative. AI has become one of those fields.

If you’re an AI business, whether you’re working in the US or Canada, there is a wealth of non-dilutive funding opportunities that can help you cover the costs of R&D that’s driving groundbreaking innovation. That funding can also help you carve out a unique space in this growing market.

Although more than $20 billion in R&D tax credits is available in North America today, only about 5 percent of eligible businesses (that is, 1 in 20) are tapping into this readily available resource.

A partner for financing innovative R&D

At Boast, our tech industry experts are among the most talented in North America. They combine knowledge of government tax code with technical expertise to truly speak your business’s “language of innovation.”

This makes it easy to communicate the unique and valuable work your R&D teams do every day. It also significantly reduces the time it takes to prepare a compelling R&D tax credit or grant claim, compared with doing it in-house or even working with an accounting firm.

The results? Teams that work with Boast save upwards of 60 hours on average, and our team delivers claims that are 35 percent more accurate on average.

To learn more about how our team can help you stretch your R&D investment further, talk to an expert today.

US & UK AI Safety Regulation FAQ

- What did the US and UK announce regarding AI safety? The two countries announced a landmark agreement to formally cooperate on testing and assessing the risks associated with artificial intelligence systems through newly established AI Safety Institutes (AISIs).

- How will the partnership work? The AISIs will facilitate the exchange of technical researchers, data from private AI models, and research reports between the US and UK. This collaboration aims to develop common approaches to evaluating AI safety.

- Why is this partnership important for businesses? Because many leading AI companies are based in the US, the safety standards and testing methods developed through this partnership will likely have a global impact. They may also influence AI regulations in other countries.

- How can AI businesses capitalize on this? Rather than viewing increased safety scrutiny as a hindrance, AI companies can seize the opportunity to drive innovation in AI safety itself and establish expertise in this emerging field through dedicated R&D efforts.

- How can R&D funding support AI safety innovation? Government innovation funding programs like R&D tax credits and research grants are expected to prioritize AI safety as an area driving valuable technological advancements. Companies can leverage these non-dilutive funds to finance their AI safety R&D efforts.